Case Study: Atlantic Technological University - Precision-Timed Multi-Modal Sensing for Human Motion Research

- Ross McMorrow

- 1 day ago


Overview

Researchers at the Machine Intelligence Lab (Mi-Lab) at the Atlantic Technological University are developing a precision-timed sensing platform for human motion understanding, bringing together wired and wireless IMUs, camera-based pose estimation, and LiDAR within a common synchronisation framework. The platform is designed to support research in group activity recognition, multi-sensor fusion, and 3D pose estimation, where accurate temporal alignment across distributed sensing systems is critical.

At the core of the deployment is a shared timing infrastructure based on a single grandmaster reference, with time distributed through NTP, PTP, PPS, and NMEA services. This timing layer is a key part of the platform, allowing sensing devices, LiDAR streams, vision pipelines, and associated processing nodes to operate against a common clock rather than relying on independent local time bases. In turn, this supports more consistent alignment across heterogeneous data sources and more controlled experimental conditions.

The result is a research platform that supports more repeatable experimentation in complex motion-analysis scenarios, while creating a robust foundation for future work in synchronised multi-modal perception.

Figure 1: The Mi-Lab platform integrates wired/wireless IMUs, camera pose estimation, and LiDAR under a single Timebeat synchronisation hub.

Deployment Context

Research in human motion understanding is moving toward increasingly multi-modal and distributed sensing environments, where inertial, visual, and ranging data must be interpreted together. In areas such as group activity recognition, 3D pose estimation, and sensor fusion, success depends not only on the quality of each sensing modality but also on the ability to align observations across the entire system in time.

In practice, this is a significant systems challenge. Wired and wireless IMUs, camera pipelines, and LiDAR sensors often differ in sampling behaviour, transport latency, internal processing, and clocking mechanisms. These differences can introduce ambiguity when comparing motion events across devices, particularly in experiments involving coordinated human activity or temporally sensitive fusion workflows.

The deployment was therefore designed around a shared timing infrastructure built on a single grandmaster reference, with time distributed through NTP, PTP, PPS, and NMEA services. By treating timing as a core part of the sensing architecture rather than an afterthought, the platform provides a stronger foundation for controlled experimentation in synchronised multi-sensor perception.

Architecture Approach

The sensing platform was architected as a modular, precision-timed research environment capable of integrating multiple sensing modalities under a common timing framework. The goal was to support complex human-motion experiments without relying on loosely aligned device clocks or post hoc approximation of event timing.

The deployment combines wired and wireless IMUs, camera-based pose estimation, and LiDAR across a distributed sensing and compute stack. Because these modalities differ in data rate, transport behaviour, internal processing, and acquisition pathway, the architecture places particular emphasis on timing as a system-level design feature. A single grandmaster reference is used to underpin the platform, with timing distributed through NTP, PTP, PPS, and NMEA-based services to support temporal consistency across sensing and processing nodes.

By aligning inertial, visual, and ranging streams to a shared temporal framework, the architecture provides a robust foundation for research in group activity recognition, 3D pose estimation, and multi-modal sensor fusion. At the same time, its modular structure allows the platform to evolve as new devices, sensing pathways, and experimental workflows are introduced.

Figure 2: Time distribution architecture — a single grandmaster clock distributes synchronisation via NTP, PTP, PPS and NMEA to all sensing and compute nodes.
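As an illustration of how two of these services typically complement each other: in a common GNSS-style arrangement, the PPS pulse marks the second boundary precisely, while an NMEA sentence supplies the date and time of day that labels that second. The sketch below shows only the NMEA side, parsing the UTC time from an RMC sentence after checking its checksum. The function names and the sample sentence are illustrative assumptions and do not describe the Mi-Lab implementation.

```python
from datetime import datetime, timezone
from functools import reduce


def nmea_checksum(body: str) -> int:
    """XOR of every character between '$' and '*' (NMEA 0183 checksum)."""
    return reduce(lambda acc, ch: acc ^ ord(ch), body, 0)


def parse_rmc_utc(sentence: str) -> datetime:
    """Return the UTC date and time carried by a checksummed RMC sentence."""
    sentence = sentence.strip()
    if not sentence.startswith("$") or "*" not in sentence:
        raise ValueError("not a checksummed NMEA sentence")
    body, _, given = sentence[1:].partition("*")
    if nmea_checksum(body) != int(given[:2], 16):
        raise ValueError("checksum mismatch")
    fields = body.split(",")
    if not fields[0].endswith("RMC"):
        raise ValueError("not an RMC sentence")
    hhmmss, ddmmyy = fields[1], fields[9]
    # Whole seconds only: the sub-second boundary would come from the PPS edge.
    stamp = datetime.strptime(ddmmyy + hhmmss[:6], "%d%m%y%H%M%S")
    return stamp.replace(tzinfo=timezone.utc)


# Hypothetical sample sentence; the checksum is computed rather than hard-coded.
body = "GPRMC,123519,A,4807.038,N,01131.000,E,022.4,084.4,230394,003.1,W"
sentence = f"${body}*{nmea_checksum(body):02X}"
print(parse_rmc_utc(sentence).isoformat())
```

The parser deliberately discards fractional seconds, since in this kind of arrangement the sentence only names the second and the pulse edge defines where it starts.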

Engineered Synchronisation Distribution

Synchronisation was engineered as a core service within the platform, enabling distributed sensing and processing elements to operate against a shared temporal reference rather than a collection of independent local clocks. This was an important design decision, as the platform spans multiple sensing modalities, transport paths, and compute nodes, each with different timing characteristics.

To establish this common time base, the deployment uses a combination of NTP, PTP, PPS, and NMEA-based time distribution under a single grandmaster architecture. Together, these services provide the timing framework needed to coordinate wired and wireless IMUs, camera-based pose estimation pipelines, and LiDAR-integrated workflows within one experimental environment.
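To make the idea of a common time base more concrete, the sketch below shows the standard four-timestamp calculation that NTP uses to estimate a client's clock offset and the round-trip delay (PTP relies on a similar exchange of timestamps). It is a generic illustration, not the platform's configuration: the `Exchange` type and the example values are assumptions, and the offset estimate is only exact when the outbound and return path delays are equal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exchange:
    """Four timestamps from one request/response exchange, in seconds."""
    t0: float  # request sent, read from the client clock
    t1: float  # request received, read from the server clock
    t2: float  # response sent, read from the server clock
    t3: float  # response received, read from the client clock


def offset_and_delay(x: Exchange) -> tuple[float, float]:
    """Return (offset, delay).

    offset: estimated server time minus client time.
    delay:  round-trip network delay, excluding server processing time.
    """
    offset = ((x.t1 - x.t0) + (x.t2 - x.t3)) / 2.0
    delay = (x.t3 - x.t0) - (x.t2 - x.t1)
    return offset, delay


# Hypothetical exchange: client is 5 ms behind the server, with 1 ms of
# delay in each direction and 0.2 ms of server processing time.
example = Exchange(t0=100.0000, t1=100.0060, t2=100.0062, t3=100.0022)
offset, delay = offset_and_delay(example)
print(f"offset ~ {offset * 1e3:.3f} ms, delay ~ {delay * 1e3:.3f} ms")
```

If the two path delays differ, half of that asymmetry appears as error in the offset estimate, which is one reason wired and wireless nodes can end up with different achievable accuracy.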

This engineered timing layer helps reduce ambiguity in cross-device event ordering and supports more reliable temporal alignment across heterogeneous data streams. For research in group activity recognition, 3D pose estimation, and multi-sensor fusion, this provides a more robust foundation than approaches that depend primarily on local clocking or offline timestamp approximation.

Figure 3: Without synchronisation, sensor streams drift independently, causing ambiguous event ordering. With Timebeat, all streams align to a shared temporal reference.
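A rough calculation shows why independent drift matters. The sketch below converts a clock's frequency error in parts per million (ppm) into accumulated time error, and estimates how long two free-running devices take to drift apart by a given amount. The ppm figures and the 100 Hz sample rate are illustrative assumptions, not measurements from the platform.

```python
def accumulated_offset_ms(drift_ppm: float, elapsed_s: float) -> float:
    """Time error in milliseconds after elapsed_s seconds at a constant drift."""
    return drift_ppm * 1e-6 * elapsed_s * 1e3


def seconds_until_offset(threshold_ms: float, drift_ppm: float) -> float:
    """Seconds for a constant drift to accumulate threshold_ms of error."""
    if drift_ppm == 0:
        return float("inf")
    return (threshold_ms / 1e3) / (abs(drift_ppm) * 1e-6)


# Assumed values: one device runs 20 ppm fast, another 15 ppm slow,
# so their relative drift is 35 ppm.
relative_ppm = 20.0 - (-15.0)

# Error between the two devices after a 10-minute recording (about 21 ms).
print(accumulated_offset_ms(relative_ppm, 600.0))

# Time before they disagree by one sample period of a 100 Hz stream (10 ms).
print(seconds_until_offset(10.0, relative_ppm))
```

Under these assumed figures, two unsynchronised devices would disagree by more than one 100 Hz sample period in under five minutes, which is the kind of ambiguity a shared reference is intended to remove.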

Experimental Validation

The platform has been used in a series of controlled motion-capture and sensing experiments designed to evaluate synchronised operation across multiple modalities. These experiments brought together wired and wireless IMUs, camera-based pose estimation, and LiDAR-supported sensing within a shared timing environment, allowing the research team to observe how distributed sensing streams behaved during common motion scenarios.

Validation focused on the practical challenge of relating motion events across heterogeneous systems operating at different sample rates, over different transport paths, and with different internal processing characteristics. By using a common temporal reference across the platform, the experiments provided a structured basis for assessing consistency of capture, alignment of observations, and repeatability of multi-modal recording workflows.
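Once every stream is timestamped against the same clock, relating samples taken at different rates becomes a simple lookup. The following is a minimal sketch of nearest-timestamp matching, assuming sorted integer timestamps in microseconds; the function names, sample rates, and tolerance are hypothetical and are not taken from the Mi-Lab experiments.

```python
from bisect import bisect_left


def nearest_index(timestamps: list[int], t: int) -> int:
    """Index of the entry in a sorted, non-empty list that is closest to t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    before, after = timestamps[i - 1], timestamps[i]
    # Ties go to the earlier sample.
    return i if (after - t) < (t - before) else i - 1


def align(reference: list[int], other: list[int], tolerance: int) -> list[tuple[int, int]]:
    """Pair each reference sample with its nearest neighbour in `other`.

    Pairs whose timestamps differ by more than `tolerance` are dropped.
    """
    pairs = []
    for ref_idx, t in enumerate(reference):
        other_idx = nearest_index(other, t)
        if abs(other[other_idx] - t) <= tolerance:
            pairs.append((ref_idx, other_idx))
    return pairs


# Assumed rates: camera frames at roughly 30 fps, IMU samples at 100 Hz.
camera_us = [0, 33_333, 66_667]
imu_us = list(range(0, 100_001, 10_000))
print(align(camera_us, imu_us, tolerance=5_000))  # [(0, 0), (1, 3), (2, 7)]
```

The tolerance makes the quality of the pairing explicit: a match is accepted only when the two timestamps are close enough to be treated as the same instant, which is only meaningful if both streams share a clock.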

These validation activities were designed to support broader research in group activity recognition, 3D pose estimation, and multi-sensor fusion, while also establishing a reliable experimental foundation for future quantitative analysis of synchronised sensing performance.

Results

The project delivered a working platform for synchronised multi-modal sensing across distributed devices, supported by a shared timing framework. Initial deployment and validation have established the practical operation of the timing infrastructure and demonstrated its value as a foundation for controlled sensing experiments.

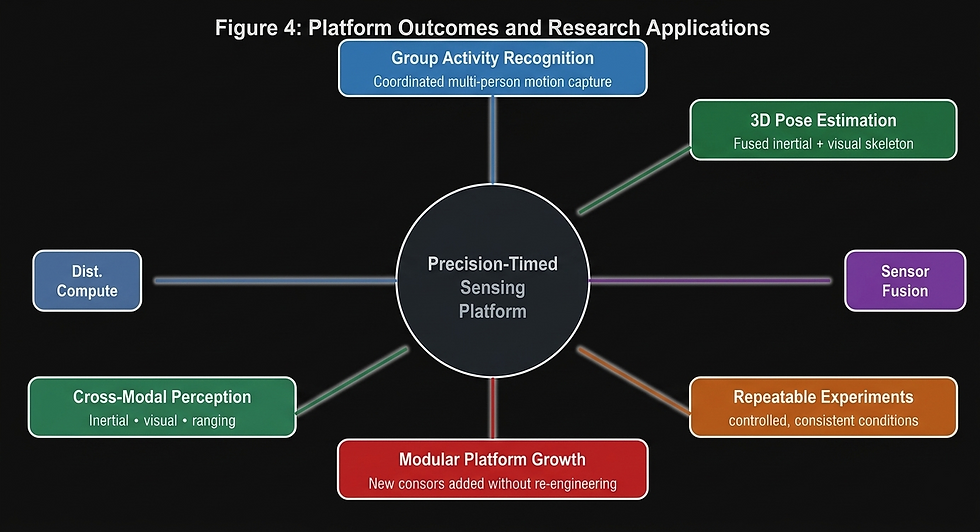

The result is a stronger experimental base for ongoing work in group activity recognition, 3D pose estimation, and multi-sensor fusion, while also providing a platform for future quantitative investigation of timing, alignment, and cross-modal perception performance.

Figure 4: Key research outcomes enabled by the precision-timed platform, spanning activity recognition, pose estimation, sensor fusion, and modular platform expansion.

Why This Matters

As human-motion research becomes more dependent on distributed, multi-modal sensing, timing is no longer just a technical detail in the background. It plays an important role in how confidently motion events can be related across devices, how reliably sensing streams can be fused, and how repeatable experimental results can become over time.

By building timing into the platform from the outset, this work creates a stronger foundation for research in group activity recognition, 3D pose estimation, and multi-sensor fusion. It also highlights the value of treating synchronisation as part of the wider sensing infrastructure rather than as a problem to be addressed only after data has already been captured.

More broadly, the platform demonstrates how precise shared timing can support the next generation of human-motion and perception research, particularly in environments where multiple sensing pathways must work together in a controlled and interpretable way.

Looking Ahead

This platform provides a foundation for future work in synchronised multi-modal perception, including expanded studies of group activity recognition, 3D pose estimation, and the integration of inertial, visual, and ranging data within shared experimental workflows.

Future development will focus on extending the sensing environment, refining cross-modal integration, and supporting deeper quantitative analysis of timing, alignment, and perception performance across distributed systems. Detailed experimental findings will be reported separately through formal academic publication.

In this way, the current deployment represents both a practical research capability and a foundation for further work on precision-timed sensing in complex human-motion environments.
